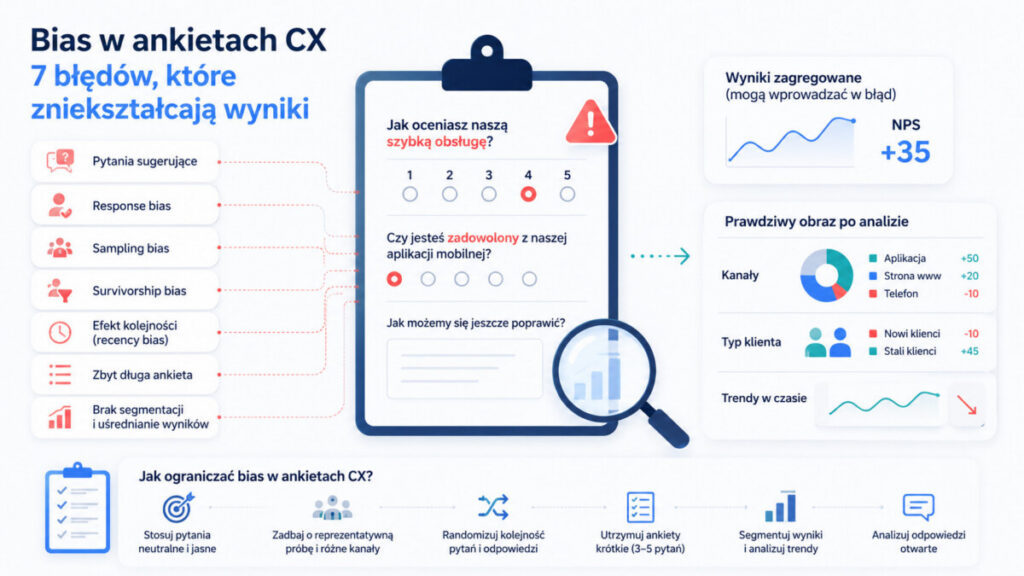

Even professional CX research is prone to bias, which can lead to erroneous conclusions and incorrect business decisions. Differences of 5-10 NPS points are often due not to real changes in customer experience, but to uncontrolled methodological errors.

What you need to know:

Modern CX platforms automatically catch 40-60% of errors, such as overly long surveys or imbalanced samples.

In many organizations, NPS, CSAT and CES are treated as "hard" numbers, almost at the level of financial data. According to the Gartner 2025 report, 78% of Fortune 500 companies use NPS as a KPI for CEOs. In Poland, 65% of service sector companies base CX investment decisions on surveys, with VoC budgets exceeding PLN 500K per year.

The problem is that dashboard numbers are perceived as objective, although research decisions are hidden in the background: survey design, sampling, distribution method, data analysis.

Example: An e-commerce company changes its NPS invitation email from "please rate" to "share your opinion." It sees a 7 p.p. increase in NPS and celebrates success. Meanwhile, 40% of the new responses come from loyal customers - a classic sampling bias that masks a drop in satisfaction among new users.

In this article, I explain what bias is in CX surveys and which 7 common mistakes most often distort survey results.

Bias is a systematic error in survey research that causes NPS, CSAT or CES results to deviate from the actual customer experience. Unlike random "noise" (random fluctuations), bias directionally shifts results - for example, it systematically overstates NPS by 5-15 points.
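The difference between noise and bias can be sketched numerically. The snippet below is a minimal illustration, not a measurement method from this article: the population shares and response rates are hypothetical assumptions, and the function names `nps_standard_error` and `observed_nps` are invented for the example. It uses the usual simple-random-sample approximation for the standard error of NPS.

```python
import math

def nps_standard_error(promoters, detractors, n):
    """Approximate standard error of NPS (in points) for a simple random sample.

    promoters, detractors: population shares between 0 and 1.
    """
    variance = promoters + detractors - (promoters - detractors) ** 2
    return 100.0 * math.sqrt(variance / n)

def observed_nps(promoters, passives, detractors, response_rates):
    """Expected NPS among respondents when each group answers at its own rate."""
    r_pro, r_pas, r_det = response_rates
    responding = promoters * r_pro + passives * r_pas + detractors * r_det
    return 100.0 * (promoters * r_pro - detractors * r_det) / responding

# Hypothetical population: 40% promoters, 35% passives, 25% detractors.
true_nps = 100.0 * (0.40 - 0.25)

# Assumption: promoters respond at 30%, everyone else at 20%.
biased_nps = observed_nps(0.40, 0.35, 0.25, (0.30, 0.20, 0.20))
bias = biased_nps - true_nps

# Noise shrinks as the sample grows; the bias above does not depend on n at all.
se_small = nps_standard_error(0.40, 0.25, 500)
se_large = nps_standard_error(0.40, 0.25, 20000)

print(f"true NPS {true_nps:.1f}, observed {biased_nps:.1f}, bias {bias:.1f}")
print(f"standard error at n=500: {se_small:.2f}, at n=20000: {se_large:.2f}")
```

Under these assumed figures the bias is about 14 points at any sample size, while the standard error falls from roughly 3.5 points to well under 1 point as the sample grows.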

The main sources of bias in CX research:

| Category | Examples |
|---|---|
| Survey design | Leading questions, erroneous scales |
| Sample selection | Sampling bias, survivorship bias |
| Respondents' behavior | Social desirability, acquiescence bias |
| Analytical decisions | Confirmation bias, analysis of averages only |

Errors in survey design lead to distorted results and faulty business decisions - even a well-designed survey can fall short if the survey objectives are unclear or the questions are ill-suited to the context. According to Deloitte CX Trends 2025, 45% of misplaced CX priorities are due precisely to bias, leading to a loss of 1-5% of revenue.

The purpose of this article is not to discourage survey research, but to show how to design customer experience surveys and interpret their data deliberately, so that these mistakes are avoided.

The following section contains 7 subsections, each describing one type of error in the context of CX and VoC surveys. The errors are grouped thematically - from survey design, to sample, to respondent behavior, to data analysis. For each error, I give a definition, its impact on results, an example, and specific risk mitigation tips.

This is one of the most common errors in survey research. A leading question suggests the answer through the way it is worded - the respondent is guided to the "right" answer before choosing it.

Typical examples from CX surveys:

Suggestive questions steer respondents toward the answer the researcher expects, which distorts survey results. Loaded language inflates ratings by 18-25% in online CX surveys. Because such questions push respondents toward positive answers, negative opinions are under-represented.

Double-barreled questions combine two topics in a single question, making it impossible to provide a fair assessment. If you ask "Was the service prompt and professional?" you don't know which attribute the customer is evaluating.
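A rough pre-launch check for both problems, loaded wording and questions that combine two topics (often called double-barreled), can be sketched as follows. This is only a heuristic illustration: the word list `LOADED_WORDS` and the function `lint_question` are hypothetical, and a flagged question still needs human review.

```python
import re

# Hypothetical word list; extend it for your own domain and language.
LOADED_WORDS = {"excellent", "great", "amazing", "friendly", "outstanding", "wonderful"}

def lint_question(question):
    """Return a list of warnings for one survey question (rough heuristics only)."""
    warnings = []
    words = set(re.findall(r"[a-z']+", question.lower()))

    # Value-laden adjectives suggest the "right" answer to the respondent.
    loaded = sorted(words & LOADED_WORDS)
    if loaded:
        warnings.append("possibly leading: " + ", ".join(loaded))

    # "and" often joins two attributes that should be rated separately.
    if "and" in words:
        warnings.append("possibly double-barreled")
    return warnings

for q in [
    "Was the service prompt and professional?",
    "How satisfied were you with our excellent support?",
    "How would you rate the speed of service?",
]:
    print(q, "->", lint_question(q) or "no warnings")
```

The check is deliberately simple: it will miss subtle framing and will flag some harmless uses of "and", so it works best as a prompt for review rather than as a gate.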

Best practices:

Response bias is a group of phenomena in which customers respond differently than they actually think. Errors in surveys can lead to social desirability bias or acquiescence bias, which artificially inflates results.

The most common types:

Example: a survey is sent immediately after contact with a consultant. The customer does not want to "report on" a particular person, so they choose higher service ratings. The consequences are a systematic overestimation of service quality and difficulty in catching real problems.

Ways to mitigate:

Sampling bias occurs when the sample of respondents does not represent the entire customer base. CX surveys often reach only those using specific channels, leaving out the rest.

Survivorship bias is the tendency to focus on data from those who have succeeded while ignoring data from those who have not, which leads to erroneous conclusions - in practice, the sample is no longer representative. In CX, this means surveying only customers who "survived" the process - completed the purchase, made it to the end of the conversation.

Example: a bank measures NPS only among customers who logged into online banking in the last 30 days and gets 45. Inactive customers who have left for the competition have a real NPS of -15 - but their voice is not heard.
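To see how much the missing group can matter, the two figures from the bank example can be blended by segment size. NPS is a difference of proportions, so the NPS of the whole base is the share-weighted sum of the segment values. The share of inactive customers is not given in the example; the 25% used below is purely an assumption, and `blended_nps` is a hypothetical helper.

```python
def blended_nps(segments):
    """Share-weighted NPS across segments.

    segments: list of (share_of_customer_base, segment_nps); shares must sum to 1.
    """
    total_share = sum(share for share, _ in segments)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("segment shares must sum to 1")
    return sum(share * value for share, value in segments)

# NPS values come from the bank example; the 75/25 split is an assumed illustration.
whole_base = blended_nps([(0.75, 45), (0.25, -15)])
print(whole_base)
```

Under that assumed split the NPS of the whole base is 30, that is 15 points below the figure shown on the dashboard.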

Practical steps:

Long surveys drastically reduce data quality. The longer the survey, the greater the risk of dropouts or cursory responses (satisficing). Each question beyond the fifth raises the dropout rate by 25%.

Consequences:

Non-response error occurs when a large proportion of customers ignore the survey. Length is the main reason customers ignore a survey, which makes excessive length one of the most common errors in the entire survey process.

Recommendations:

The structure of the scale itself can introduce bias. Inappropriate response scales, the absence of a neutral option, or the use of asymmetric scales force a positive rating. When respondents cannot accurately express the intensity of their experience, results are distorted.

Recency bias is the tendency to give more weight to the most recent data or to the last options on the list (+20% selection of the most recent options).

Effects:

Best practices:

Looking only at the aggregate average ("our NPS is 35") without analyzing the distribution and segments of customers is a kind of analytical bias. Selection bias occurs when a data sample is selected in a way that does not reflect the entire target population, leading to erroneous conclusions.

Example: Average NPS for the entire base = 35. But:

Averaging masks the problem that occurs in onboarding new customers - the most important segment for growth.
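A segment breakdown of this kind takes only a few lines. The sketch below uses the standard NPS definition (share of scores 9-10 minus share of scores 0-6); the responses and segment names are hypothetical, chosen only so that the aggregate comes out at 35, and are not the figures behind the example above.

```python
from collections import defaultdict

def nps(scores):
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("no scores")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)

def nps_by_segment(responses):
    """responses: iterable of (segment, score) pairs."""
    grouped = defaultdict(list)
    for segment, score in responses:
        grouped[segment].append(score)
    return {segment: nps(scores) for segment, scores in grouped.items()}

# Hypothetical responses: 14 long-standing customers and 6 new ones.
responses = (
    [("long-standing", 10)] * 10 + [("long-standing", 8)] * 3 + [("long-standing", 5)] * 1
    + [("new", 9)] * 1 + [("new", 7)] * 2 + [("new", 3)] * 3
)

overall = nps([score for _, score in responses])
by_segment = nps_by_segment(responses)

print(f"overall: {overall:.0f}")
for segment, value in by_segment.items():
    print(f"{segment}: {value:.0f}")
```

In this made-up data the aggregate of 35 hides a strongly positive score among long-standing customers and a negative one among new customers, which is exactly the pattern an average cannot show.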

Practices:

Confirmation bias is the tendency to notice and interpret information in a way that confirms existing beliefs, which can lead to a distortion of reality. In CX, it manifests itself by looking for data that confirms preconceived hypotheses.

Example: the team expects NPS to grow after the implementation of a new IVR. It focuses on positive changes in the young customer segment (+8 p.p.), ignoring the decline among seniors (-12 p.p.) and in the telephone channel.

Confirmation bias can affect the entire process: survey design (questions written to support a thesis), choice of indicators, choice of comparison periods, method of presentation to the board.

Ways to mitigate:

The following list is a practical "checklist" for a CX manager to go through before launching any survey:

| Area | Checklist question |
|---|---|
| Language of questions | Are the questions neutral, without value-laden adjectives? |
| Length | Does the survey have only the necessary number of questions (3-5)? |
| Scales | Are the scales symmetrical, internally consistent and consistent with previous surveys? |
| Sample | Does the sample cover key segments and points of contact? |
| Piloting | Has the survey been piloted with a small group to identify shortcomings? |
| Segmentation | Has segmentation and linkage to transactional data been planned? |
| Open-ended responses | Has text analysis (categorization, sentiment) been planned? |
| Hypotheses | Did the team define the business questions in advance? |
| Clarity | Are the questions clear and free of complex language? |

Failure to test a survey before distribution can lead to problems, such as overly complicated questions not understood by respondents. Using complicated technical language leads to inaccurate customer responses.

The myth of "the more responses, the better" is particularly problematic in CX. A large sample can be subject to serious bias - we end up measuring, very precisely, something other than what we think we are measuring.

Small sample bias occurs when conclusions are drawn from too little data - in such situations, it is worth considering whether to skip the survey altogether or replace it with other methods. But a large sample does not guarantee reliable results either - 20,000 responses from mobile app users alone may be less valuable than 800 responses from a well-balanced sample including mobile, web and branches.
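One way to put a number on "less valuable" is to weight each channel back to its share of the customer base and compute the effective sample size with Kish's approximation. The sketch below assumes hypothetical channel shares and response counts, and the helper names are invented for the example. Note that a sample with no web or branch responses at all cannot be reweighted, so the skewed sample here contains a few of each.

```python
def poststratification_weights(sample_counts, population_shares):
    """Per-respondent weight for each channel: population share / sample share."""
    total = sum(sample_counts.values())
    return {
        channel: population_shares[channel] / (count / total)
        for channel, count in sample_counts.items()
    }

def effective_sample_size(sample_counts, weights):
    """Kish's approximation: (sum of weights)^2 / sum of squared weights."""
    sum_w = sum(sample_counts[c] * weights[c] for c in sample_counts)
    sum_w2 = sum(sample_counts[c] * weights[c] ** 2 for c in sample_counts)
    return sum_w ** 2 / sum_w2

# Assumed share of each channel in the real customer base.
population = {"mobile": 0.40, "web": 0.35, "branch": 0.25}

# Hypothetical samples: 20,000 responses dominated by mobile vs. 800 balanced ones.
skewed = {"mobile": 19800, "web": 120, "branch": 80}
balanced = {"mobile": 320, "web": 280, "branch": 200}

n_eff_skewed = effective_sample_size(skewed, poststratification_weights(skewed, population))
n_eff_balanced = effective_sample_size(balanced, poststratification_weights(balanced, population))

print(f"skewed: {n_eff_skewed:.0f} effective responses out of {sum(skewed.values())}")
print(f"balanced: {n_eff_balanced:.0f} effective responses out of {sum(balanced.values())}")
```

With these assumed numbers the 20,000 skewed responses are worth about 550 equally weighted ones, fewer than the 800 from the balanced sample. Weighting corrects only for the imbalance between channels; it does not remove bias within a channel.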

Data quality depends on:

Unclear survey questions can lead to misinterpretations and poor data quality, so it is important that questions be clear and direct. In mature VoC programs, "number of responses" is treated as ancillary - data quality indicators are at the center.

Bias in CX surveys is inevitable, but it can be managed. The 7 biases described are a "checklist" for regular review - from survey creation to data collection to analysis. High NPS and response rates alone are not enough without data quality analysis.

A mature approach combines:

You can take the first step right away: choose one survey currently in progress (e.g., NPS after a hotline contact) and check which of the described errors may be present in it. This is the basis for an informed survey that will ultimately provide reliable results and solid data for strategic decision-making.

"Ordinary" measurement error is random (noise), while bias is systematic and shifts results in a specific direction - for example, always overestimating NPS. Bias results from repeated survey decisions, not from individual random mistakes by respondents. Question order is one example: earlier questions can influence how a respondent answers the later ones, and because the effect recurs in every completed survey, it is bias, not noise.

No - a high NPS can coexist with severe sampling bias or suggestive questions. The quality of the survey is evidenced by the way respondents are recruited, the stability of results over time and between channels, the content of open-ended responses, and the link between NPS and actual behavior (churn, purchases). Only the combination of a high NPS and good methodology allows the indicator to be treated as a reliable source for identifying trends.

Audit key surveys (NPS, CSAT, CES) at least once a year, and additionally whenever there are major changes in customer processes. Signals that an audit is needed include unusual changes in results without a clear reason, or uneven participation of segments in the sample. It is worth involving not only researchers in the audit, but also people from operations who are familiar with the real processes - this leads to better results and helps avoid false conclusions.

Yes - open-ended responses often reveal discrepancies between numerical ratings and real experience. Customers give high ratings, but describe problems in the comments - this signals social desirability bias. A systematic analysis of the text can verify that NPS/CSAT methods do not give distorted data and that the reliability of the survey is maintained.

Practical symptoms: selectively showing only "nice" results, no room for conclusions contrary to the thesis, avoiding topics that undermine the effectiveness of projects. Introducing a "devil's advocate" in the results review and a standard reporting template (with sections for positive and negative conclusions) helps reduce the risk. Reducing confirmation bias requires management's permission to discuss uncomfortable insights - a change that is cultural as well as methodological, and essential to the credibility of the entire VoC program.

Copyright © 2023. YourCX. All rights reserved — Design by Proformat